Much of the focus of the debate on global warming has been on the level of carbon dioxide emissions. There is very good reason for this, considering how much CO2 we are pumping into the atmosphere and its proven relationship with average global temperature. Yet, of course, carbon dioxide is by no means the only culprit, only the most abundant and significant contributor. Another, far more potent greenhouse gas is methane (CH4), which, depending on who you listen to, is between twenty and thirty times more potent than CO2. Its presence in the atmosphere is rapidly growing. Indeed, according to recent research headed by Natalia Shakhova, we might be on the brink of a tipping point, the result of a massive environmental feedback in the Arctic.

Firstly, I’d like to say something about climate change prediction models. Most climate models have focussed on the shorter term, namely, the 21st century, with few daring to venture into the 22nd or 23rd centuries and beyond to predict global atmospheric and climatic conditions. It goes without saying that such modelling is fraught with uncertainty, especially considering our relatively limited understanding of environmental feedback mechanisms. This inclines most climate models to be overly conservative in their predictions, especially in the case of sea-level rise. The IPCC’s calculations in its fourth assessment of sea-level rise, based largely on melting ice and thermal expansion of water, did not factor in dynamic processes such as calving ice-sheets and the observed acceleration of ice-loss and melting, effects which are less easy to predict and model. Their most recent figure of an 18-59cm rise in sea-level by 2100 falls short when we use a different measuring stick – average global temperature relative to sea-level.

With the planet currently trending at the highest end of greenhouse gas emission scenarios, and bearing in mind the strong relationship between atmospheric carbon dioxide levels and global temperature, a more likely outcome is a sea-level rise of between 75 and 190cm. It is worth noting that a sea-level rise of one metre would be devastating for low-lying coastal regions, such as The Netherlands, Florida, Bangladesh and Shanghai, to name a few. It’s all very well to argue over the numbers, which, at this stage, seem so abstract, yet their manifestation in reality would be akin to a vast global crisis, potentially making some of the most populous regions of the planet effectively uninhabitable. Humans will no doubt battle it very effectively initially, but if major cities are subjected to consistent flooding, it will be very difficult to sustain year-round economic activity; industrial output will decline and coastal infrastructure may well follow. It is by no means impossible that major metropolises will eventually have to be abandoned.

There is another fundamental problem with our shorter-term climate modelling. Scientists may talk of a potential sea-level rise by the end of the 21st century, but where will that leave us at the end of the 22nd century? On a warmer planet, ice-melt is not about to stop at some arbitrary date that humans see as a convenient cap for current predictions. If, as has so far been observed, the rate of melt increases as the temperature increases and sea-level rise accelerates towards a worst-case outcome by the year 2100, then what of the subsequent century? Can we expect to add a further two metres, or three perhaps? And what of the very long term?

Of course, the idea is to achieve a zero carbon global economy by the end of the 21st century. I don’t mean to be overly cynical, but the idea seems, at this stage, so utterly fanciful that it’s quite difficult to accept. Humans will, in all likelihood, continue to use fossil fuels as long as they can dig them up. Fears of peak oil have been pushed significantly back as the vast reserves trapped in tar sands have been factored in. As is discussed below, there are vast methane reserves in the Arctic. I expect this planet will be very much a going concern in the middle of the next century. When food prices hit the roof, we’ll clear out the remaining 82% of the Amazon and plant it all with crops. I hate to say it, but that’s a hell of a lot of good agricultural land. When Chinese capital completes its quasi-colonial infrastructural investment in Africa, the vast forested lands of the Congo basin will be developed and exploited. When the aquifers fail in China and India, they’ll desalinate the sea. Human industrial society is just beginning; it will, in all likelihood, get a great deal bigger. Inequalities will be vast, but both human and industrial resources will be fully shackled to the task with the eternal bribe of hope.

In the short term, the failure of Europe marks the beginning of a decline in European leadership. It will likely become, ultimately, an effete satellite of East Asia. Wealth and power will shift back to India and China, where they resided for the first sixteen centuries of the last two millennia. One thing is for certain: there are going to be a lot of serious hiccups along the way, for, throughout all this, we’ll be pumping out shitloads of carbon.

Presently the level of atmospheric CO2 is roughly 392 parts per million (ppm), up from roughly 315 ppm in 1960. Atmospheric levels are now estimated to be at their highest for twenty million years. There is little likelihood of another ice-age occurring any time soon, put it that way.

Carbon dioxide is now increasing in the atmosphere at roughly 2 ppm each year, a rate which has picked up considerably since forty years ago, when it was measured at 0.9 ppm / year. We know that during the Eocene epoch, a mere thirty-eight million years ago, atmospheric levels of carbon dioxide sat at around 2000 parts per million and the average global temperature was roughly ten degrees warmer than today. Indeed, the Earth currently sits in a temperature trough, likely the terminal end of an extended cool period that began at the end of the Eocene, after a lengthy hot period that spanned most of the Cretaceous. The hot period peaked in the Eocene, when there was no ice at the poles and both the Arctic region and the continent of Antarctica were forested with tropical plant species (the dinosaurs that had roamed the polar regions in the Cretaceous were long gone by then). As Antarctica drifted south it gradually lost the warming benefits of tropical ocean currents and began to cool. Its isolation at the bottom of the planet from tropical currents might be sufficient to see it retain its ice, even during a significant rise in average global temperatures, but this is by no means clear. One thing is certain: the poles are warming significantly faster than the tropics and the eventual loss of Antarctic ice is, if not inevitable, certainly plausible in the long term. It is worth noting that during the Eocene, sea-level was 170 METRES higher than it is today. Have a look at this map:

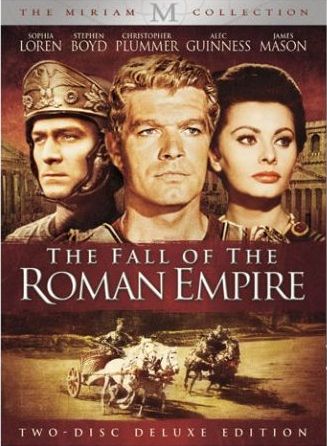

This is clearly a worst-case scenario, yet even should it take two thousand years to melt all the planet’s ice, it’s difficult to imagine anything equally catastrophic having occurred in the previous two thousand years of human history. The fall of the Roman Empire, the Crusades, the Black Death, genocide in South America, the Great Depression and World War 2 look very mild by comparison. Simply put, we really do not want to return atmospheric conditions to those of the Cretaceous or Eocene. Yet if humans continue to burn fossil fuels far into the future (and in the last year our rate of output was the highest ever recorded, despite depressed global economic conditions), it is by no means impossible that we could push atmospheric carbon dioxide levels towards those seen in those times in the very long term. Of course, such a situation is unlikely, especially considering the disruption to industrial and economic activity that would occur should we see even one fifth of the above 170 metre rise in sea-level.

I came here to talk about methane, and the above is clearly off-topic. Yet it serves to demonstrate the degree to which climate models often limit their predictions to currently observable and measurable factors, such as atmospheric carbon dioxide levels, sticking to methods that are sufficiently robust to make solid predictions and ignoring many feedbacks that are less easy to measure accurately. They also tend to tell us about the next hundred years, and not the next thousand, which is equally relevant to the future of humanity and our ability to survive and thrive in a comfortable and stable environment. Such caution is good scientific practice, yet it leaves us with predictions that almost certainly understate the likely consequences of global warming.

One such unpredictable feedback is methane, and methane is a hell of a problem. Pound for pound, methane is roughly twenty-two times worse than carbon dioxide as a greenhouse gas. When we think of methane’s role in global warming, we usually consider the flatulence of cattle. Meat production accounts for roughly 80% of all agricultural emissions globally – a figure which is bound to get worse as the rapidly expanding middle class, across Asia in particular, demands more protein. Livestock currently contribute roughly 20% of methane output, with the rest coming from rice production, landfill sites, coal mining, and as a by-product of decomposition, particularly from methane-producing bacteria in places such as the Amazon and Congo basins. These are the measurable outputs included in most climate models, yet what the models do not include is the steady and rapid increase in methane release across the Arctic Circle.

The Arctic Circle is full of methane. Most of it is locked up in permafrost soils and seabed, though the gas has long been escaping through taliks, areas of unfrozen ground surrounded by permafrost. Global warming, however, has seen the most pronounced temperature increases at the poles, with a measured 2.5 degree average increase across the Arctic. As the region warms (current rates suggest a 10 degree temperature spike by the end of the century), as less ice forms, and as ocean temperatures in the region also rise, the until recently frozen seabed, more than 750,000 square miles across this vast region, has slowly, but surely, begun to thaw. An area of permafrost roughly one third this size, with equally intense concentrations of methane, also exists on land, mostly in far eastern Russia. This too has, in places, begun to thaw.

Lower-end estimates suggest that there are roughly 1400 gigatons of carbon locked up in the Arctic seabed. A release of merely 50 gigatons of methane would increase atmospheric methane levels twelve-fold. Presently, as Natalia Shakhova of the International Arctic Research Center states:

“The amount of methane currently coming out of the East Siberian Arctic Shelf is comparable to the amount coming out of the entire world’s oceans. Subsea permafrost is losing its ability to be an impermeable cap.”

Much of the methane released is being absorbed by the ocean. In the area studied, more than 80% of deep water and more than half of the surface water had methane concentrations eight times higher than normal seawater. In some areas concentrations were considerably higher, reaching up to 250 times greater than background levels in summer, and 1400 times higher in winter. In shallower water, the methane has little time to oxidise and hence more of it escapes into the atmosphere.

Offshore drilling has revealed that the seabed in the region is dangerously close to thawing. The temperature of the seafloor was measured at between -1 and -1.5 degrees Celsius within three to twelve miles of the coastline. Paul Overduin, a geophysicist at the Alfred Wegener Institute for Polar and Marine Research (AWI), speaking to Der Spiegel, stated that:

“If the Arctic Sea ice continues to recede and the shelf becomes ice-free for extended periods, then the water in these flat areas will get much warmer.”

More research is needed into the process and its possible long-term consequences. A sustained and intense release of methane would indeed have a significant impact on global warming, but at this stage it is difficult to be certain whether or not this will occur.

Natalia Shakhova remains cautious as to whether warming in the region will result in increased gradual emissions, or sudden, large-scale and potentially catastrophic releases of methane.

“No one can say right now whether that will take years, decades or hundreds of years.”

The threat, however, is very real. Previous studies showed that just 2% of global methane came from Arctic latitudes, yet with the recent rise in output, by 2007, the Arctic’s contribution to global methane had risen to 7%. Methane tends to linger in the atmosphere for ten years before reacting with hydroxyl radicals and breaking down into carbon dioxide. Yet in the case of ongoing large releases, the available hydroxyl might be swamped, allowing the methane to hang around for up to fifteen years. Not only would the atmosphere’s ability to break down methane be significantly compromised, but the lasting methane presence would trigger further warming and thus further methane release. This is a classic case of a potentially dire environmental feedback, and it might be a very long time before we see the end of such a cycle should it commence. It is especially concerning when we take into account that the prerequisites for triggering such an event might already be in place. Irrespective of how much humans cut emissions output, which, quite simply put, in real terms, they are not doing in the slightest, the trajectory of global temperature increase based on current greenhouse gas emissions is already sufficient to thaw the Arctic seabed eventually.
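For anyone who likes to see the arithmetic, here is a rough Python sketch of what the jump from a ten-year to a fifteen-year lifetime means for a single pulse of methane. It rests on two assumptions that are not taken from the research quoted here: that the pulse decays exponentially, and that the ten and fifteen year figures can be treated as e-folding lifetimes. The function name is made up for this example, and the sketch ignores the feedback itself (further warming, further release).

```python
import math


def fraction_remaining(years_elapsed: float, lifetime_years: float) -> float:
    """Fraction of a one-off methane pulse still in the air after years_elapsed.

    ASSUMPTION: simple exponential decay, with lifetime_years treated as an
    e-folding lifetime. Illustrative only; not a climate model.
    """
    return math.exp(-years_elapsed / lifetime_years)


if __name__ == "__main__":
    # Compare the two lifetimes mentioned in the text: ten and fifteen years.
    for lifetime in (10.0, 15.0):
        for years in (10, 20, 50):
            share = fraction_remaining(years, lifetime)
            print(f"lifetime {lifetime:.0f} yr, after {years} yr: {share:.0%} remains")
```

Under these assumptions, roughly 14% of a pulse is still in the atmosphere after twenty years with a ten-year lifetime, against roughly 26% with a fifteen-year one, so nearly twice as much methane is left to keep warming things.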

Still, there are too many variables and too much uncertainty about the scale and pace of this phenomenon and, for this reason, scientists are right to be cautious. Yet, when we consider that something as potent as this is not being included in climate models on account of its unpredictability, it reminds us how conservative and cautious those models really are and how dangerous our flirtation with heating the planet really is.

It would almost be fitting for humans, as decadent, indulgent and superfluous as they are, to drown in flatulence. It would make for an amusingly sarcastic take on history, written at the consequence end of the great and unfunny fart joke that is the Anthropocene epoch. Perhaps a thousand years from now, when humans, with their cockroach-like ability to adapt and survive in almost any environment, outdone for durability only by the bacteria they seem determined to hand the planet back to, have reconstructed their societies in a more sustainable manner on higher ground, they will look back and wonder why they had their priorities so utterly wrong for so long.

ps. Again, I apologise for lack of references. If you made it this far, no doubt you can do your own research into the matter. The purpose of this article is to be thought-provoking, not comprehensively informative. Good luck out there!

– P. Rollmops
